When I went to bed late last night, I was not completely satisfied with the blog rant I had just written. Not that anything in there is wrong; I stand by it. But just ranting cannot be the solution - there must be some way to get what Camino people want without making UA strings suck even more.

Up to now, there are several ways people deal with websites that lock visitors out because of UA strings they "don't recognize":

- Extend the UA string with some token they recognize. As said in the other post, this sucks. A lot.

- Evangelizing. Find out what kind of errors the site is making with browser detection, mail the webmasters, tell and help them to improve the situation. That's hard, manual work, often with very little reward, but where it helps, it makes the web better for everyone.

- Selective UA spoofing on the user side. Extensions like PrefBar give you a handy dropdown menu to spoof certain well-known UA strings when accessing websites, and a good way to switch back to the default. This is handy for experts, but normal users don't understand it. Additionally, the accessed websites don't even see your browser in any stats - and there's a high risk that you hide your real browser on more occasions than necessary.

All those variants suck in some way and none stands out as a good solution - not even evangelizing, which is good and noble but just doesn't work in enough cases.

But then, I realized, all those solutions are so 1990s, so static, so Web 1.0 - and we're all talking in terms of the modern, new, shiny, dynamic Web 2.0 all the time. So maybe that great new world has a better solution for this problem as well. And actually, I think it really does. I began to think we could just combine all the methods above with some other tooling we have, make everything dynamic, and we have a cool, new approach!

Firefox has this nice feature of preventing phishing through lists of known phishing sites that it dynamically updates from the web. Currently, there's a plan to do a very similar thing in Gecko for completely blocking sites that offer malware.

So, what about having a list of sites that need UA spoofing, dynamically maintained by our users, and dynamically downloaded and used by the web browser? That way, we would spoof our UA (by adding any token the site needs) only on specific websites. Each entry in the list would carry the domain name where spoofing is needed along with the sort of spoofing required, and the client would follow those rules.
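To make the idea a bit more concrete, here is a minimal sketch in Python of how a client might apply such a list. Nothing here is implemented anywhere: the `SpoofRule` type, the `find_rule` and `ua_for` helpers, the `bank.example` entry and the sample UA string are all made up for illustration, and I'm assuming the simplest kind of rule, "append this token on this domain and its subdomains".

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass(frozen=True)
class SpoofRule:
    """One hypothetical list entry: where to spoof and what token to add."""
    domain: str
    add_token: str


def find_rule(rules, url):
    """Return the first rule whose domain matches the URL's host, or None."""
    host = (urlsplit(url).hostname or "").lower()
    for rule in rules:
        # Match the listed domain itself and any of its subdomains.
        if host == rule.domain or host.endswith("." + rule.domain):
            return rule
    return None


def ua_for(rules, url, default_ua):
    """Return (ua_string, spoofed) for a request to the given URL."""
    rule = find_rule(rules, url)
    if rule is None:
        return default_ua, False
    # Keep the real UA and only append the token the site insists on.
    return f"{default_ua} {rule.add_token}", True


# Illustrative data only; a real list would be downloaded from the central site.
RULES = [SpoofRule("bank.example", "Firefox/2.0")]
DEFAULT_UA = "Mozilla/5.0 (Macintosh) Gecko/20070101 Camino/1.5"

if __name__ == "__main__":
    print(ua_for(RULES, "https://www.bank.example/login", DEFAULT_UA))
    print(ua_for(RULES, "https://example.org/", DEFAULT_UA))
```

The second value returned by `ua_for` tells the browser whether it actually spoofed anything on this request.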

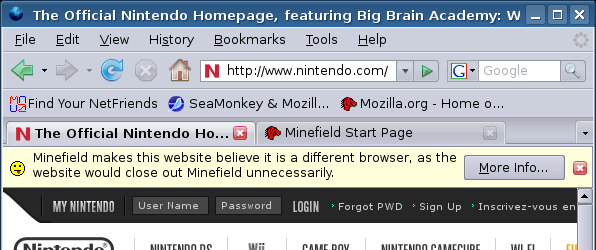

Of course, it's bad to do this automatically without telling the user that we are doing non-standard things - after all, the website might tell the user he is using Firefox even though he's using Camino. But then, there's this nice idea of info bars in the browser, which we could use for giving the user feedback about what's happening:

This is just a graphical mockup of how it would look, of course - I haven't implemented anything.

The "More Info..." button would open a new tab/window (depending on user prefs) with a page that carries all kinds of info about our spoofing of UA strings on this website. The user can leave his comments there, find a contact for nagging the webmaster about this problem, etc. Of course, this is a page on our central site, which also delivers the dynamic list and is where our users can add spoofing for sites, change spoofing options for those sites, and similar things. This should be driven by the community and should combine the reporting system with evangelizing options.

Of course, several points are still open in this concept:

- The (advanced) user needs a way to opt out of spoofing for the site (for testing)

- The (advanced) user needs to be made aware of how to report pages in the first place

- Perhaps the user needs an option to mute the warning for pages he visits very often?

- and lots of others...
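The opt-out and mute points could be handled with two per-user sets of domains. This is only a rough sketch in Python under that assumption; `SpoofPrefs` and `decide` are hypothetical names, not anything that exists in a browser today.

```python
from dataclasses import dataclass, field


@dataclass
class SpoofPrefs:
    """Hypothetical per-user overrides on top of the downloaded list."""
    opted_out: set = field(default_factory=set)  # never spoof on these domains
    muted: set = field(default_factory=set)      # spoof, but hide the info bar


def decide(domain, listed, prefs):
    """Return (spoof, show_infobar) for a domain.

    `listed` says whether the downloaded list asks for spoofing there.
    """
    spoof = listed and domain not in prefs.opted_out
    show_infobar = spoof and domain not in prefs.muted
    return spoof, show_infobar


if __name__ == "__main__":
    prefs = SpoofPrefs(opted_out={"a.example"}, muted={"b.example"})
    for name in ("a.example", "b.example", "c.example"):
        print(name, decide(name, True, prefs))
```

Opting out wins over muting here: a site the user has opted out of is never spoofed, so there is nothing to warn about.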

And last but not least:

Someone needs to implement this system.

I'm willing to help with bits and pieces where I can, but I'm not a big XUL hacker and I have very little time to work on yet another new project.

It would be very cool to find someone who could implement a system like that.

If we design it well, it can help lots of browsers, not only Minefield, Camino and SeaMonkey, but also any number of others who could implement the same system.

Actually, we may even be able to leverage that system for going back to really short and useful UA strings for all our browsers, including even Firefox. Who knows?